Reasons Why The Truman Show Holds Lasting Relevance (Perspectives Part 6)

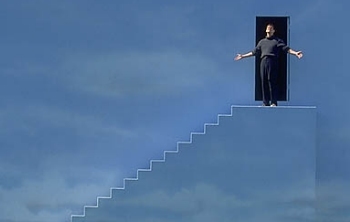

The Truman Show, directed by Peter Weir, is a 1998 science fiction comedy-drama starring Jim Carrey as Truman Burbank, an average twenty-nine-year-old insurance salesman who, unbeknownst to him, has been living his entire life on the set of a live television program called The Truman Show. Every detail of Truman's life, down to his career, friendships, and marriage, has been fabricated by the show's creator, a man named Christof. The set of The Truman Show, an artificial community called Sea Haven, is equipped with over 5000 hidden cameras that monitor Truman's every move, and every resident, down to his 'best friend,' 'wife,' and even 'mother,' is merely an actor or actress hired to sustain the illusion that he leads an ordinary life. When props start to malfunction, however, Truman begins to question the nature of his reality and eventually plans a successful escape.

On the surface, The Truman Show can be taken as an inspiring and relatively light-hearted story about overcoming society's constructs and seizing opportunities. I mean, considering Jim Carrey's reputation for goofy, tongue-in-cheek roles, themes like these are to be expected. The Truman Show also raises a number of deeply philosophical questions, though, and when viewed through the right lens, it speaks profoundly to the epistemological concepts of skepticism and knowledge.

Skepticism traces back thousands of years, but Descartes' Meditations on First Philosophy, published in 1641, was one of the first texts to clearly articulate the idea that almost everything can reasonably be doubted. If you've been following my Perspectives series from the beginning, you might remember some of the concepts presented in Descartes' first two meditations. Almost two months ago I deconstructed and interpreted some of his conclusions, and if you missed that post (or if you want a refresher), you can follow this link to check it out.

So, as you may recall, Descartes supposes that an evil genius, as powerful as he is deceitful, may have devoted all his efforts to deceiving him by manipulating his perceptions and experiences. Descartes writes: "I shall consider that the heavens, the earth, colours, figures, sound, and all other external things are nought but the illusions and dreams of which this genius has availed himself in order to lay traps for my credulity." In acknowledging that such a being might exist, Descartes gives himself a reason to question and disbelieve every aspect of his life, and the only truth he finds that survives this doubt is the existence of his own thinking mind.

In terms of The Truman Show, Christof, the inventor and designer of the television program, is akin to the evil genius of Descartes’ meditations. Outwardly this may not seem to be the case, because the film presents Christof as a godly character, both through his name, which derives from “Christ,” and through camera angles that emphasize how he sits above his creation and talks down to Truman from the sky. Watched with a more cynical eye, however, the film makes it clear that Christof is analogous to Descartes’ deceiver rather than to God, because his whole life’s work involves manipulating Truman’s world in order to mislead and delude him for entertainment’s sake. The way Christof’s character is disguised as divine and saintlike on the surface may even reflect the deceptive nature of Descartes’ evil genius.

Even though every aspect of Truman’s life is entirely contrived, he is nevertheless comfortable in it, at least at the beginning of the film, before he suspects any falsehood. This blissful unawareness is consistent with the philosophy of the Greek skeptic Pyrrho of Elis, known as Pyrrhonism. Pyrrhonism is centered on acatalepsy, which in simplest terms is the impossibility of comprehending anything as it actually is. While this may at first seem discouraging, Pyrrho held that accepting it, and suspending judgment accordingly, leads to ataraxia, a state of serenity characterized by lasting freedom from distress. In other words, Pyrrho believed that ignorance was bliss. The Truman Show’s take on ataraxia is summarized about two-thirds of the way through the film, when Christof says: “We accept the reality of the world with which we’re presented. It’s as simple as that. If [Truman] was absolutely determined to discover the truth, there’s no way we could prevent him … Ultimately Truman prefers his cell.” After all, Truman has every reason to be satisfied with his life because, fictitious as it is, he is safe, healthy, and relatively well-off.

Despite being set up for a fundamentally gratifying life, however, Truman eventually grows unhappy in his artificial world and resolves to escape Sea Haven. His dissatisfaction stems from suspicion, confusion, and a lack of understanding. This is evident from the fact that he is content at the beginning of the movie: only when malfunctioning props, interfering actors, and glitches in the system give him cause for doubt does he become discontented with his existence. In this way, Truman’s search for truth is comparable to that of the prisoners in Plato’s Allegory of the Cave.

The Allegory of the Cave is a parable that Plato presents in Book VII of his dialogue the Republic. Socrates, the narrator of the allegory, describes a group of prisoners who have spent their entire lives chained inside a cave, facing a blank wall. Behind them burns a fire, and as objects are carried past it, their shadows are cast on the wall the prisoners are facing. In this manner, the prisoners come to believe that reality consists of nothing more than shadows and echoes. Until a prisoner is released, he has no reason to doubt that the shadows he perceives are anything other than the truth; once he is freed from the cave, however, he perceives the true form of reality rather than the fabricated reality to which he had grown accustomed. Similarly, when The Truman Show’s system malfunctions, Truman’s eyes are opened to the possibility of something more, and only then is he able to escape his cave, so to speak, and experience reality for what it truly is.

And while Plato’s Allegory of the Cave perfectly illustrates Truman’s situation, it also applies to our own experiences, because one of the main ideas Plato was trying to communicate through the allegory is that there are two worlds: the world of becoming (the material world) and the world of being (the immaterial world). Plato believed it was wise to reject the unclear, imperfect appearances of the world of becoming and to embrace the pure Forms of the world of being. If you replace the set of The Truman Show with Plato’s world of becoming, and the reality outside Sea Haven with the world of being, then it becomes evident that in escaping the show, Truman opens himself to a conversion from being deceived by material things to embracing the truth.

So although we may not be imprisoned in an elaborate television program in which all our experiences are contrived by an enterprising producer, there are still ways in which today’s society can deceive us, from politicians who lie in their campaigns to win votes to companies that lie in their advertisements to make money. If we want to open ourselves to the truth, we must follow Truman in abandoning whatever makes us vulnerable to manipulation. However deception manifests itself, Truman Burbank offers a model we must follow if we want to free ourselves from it and, metaphorically, unchain ourselves from the cave wall we are bound to.

And that's why The Truman Show holds lasting relevance.